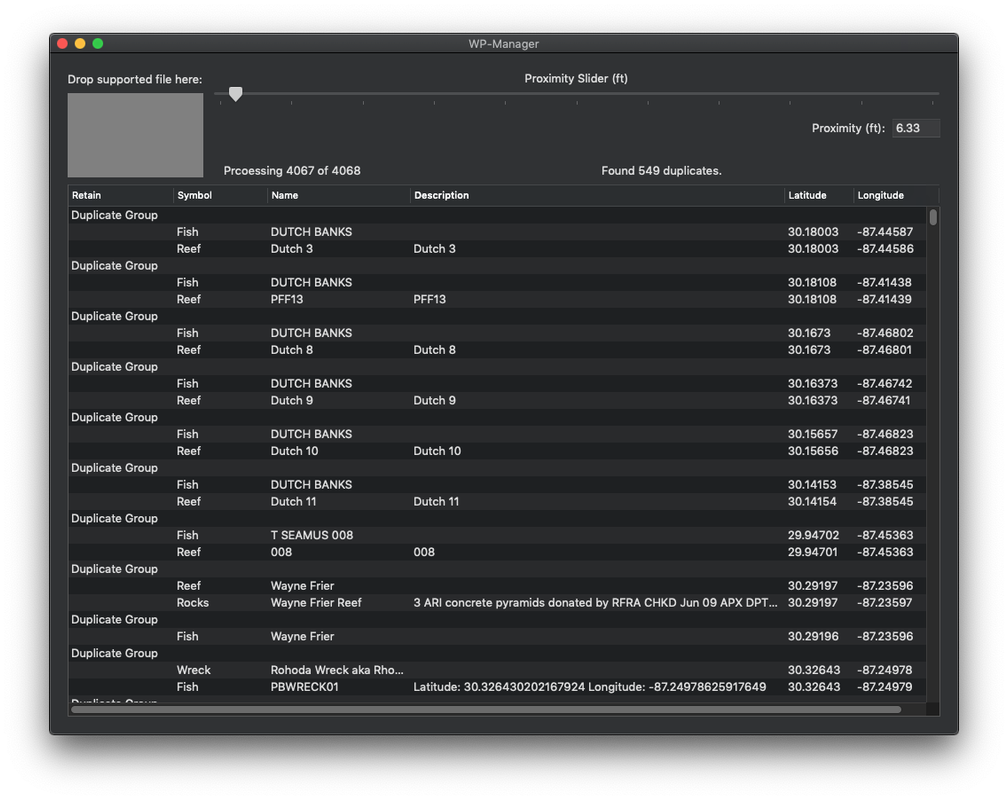

We log a variety of data, from listening history, to results of A/B testing, to page load times, so we can analyze and improve the Spotify service. We instrument and log data across every surface that is running Spotify code through a system called the Event Delivery Infrastructure (EDI). Throughout this blog post we make a distinction between the internal users of the EDI, who are Spotify Engineers, Data Scientists, PMs and squads, and end users, who use Spotify as a service and audio platform.

In 2016, we redesigned the EDI in Google Cloud Platform (GCP) when Spotify migrated to the cloud, and we documented the journey in three blog posts (Part I, Part II, and Part III). Not everything went as planned, and we wrote about our learnings from operating our cloud-native EDI in Part IV. Our design was optimized to make it quick and easy for internal developers to instrument and log the data they needed. We then extended it to adapt to the General Data Protection Regulation (GDPR), we introduced streaming event delivery in addition to batch, and we brought BigQuery to our data community. We also improved operational stability and the quality of life of our on-call engineers. The peak traffic increased from 1.5M events per second to nearly 8M, and we were ready for that massive scale increase. This increased the total volume of data which we ingested daily to nearly 70TB! (Figure 1).

Figure 1: Average total volume (TB) of events stored daily by our ETL process (after compression).

However, with that high adoption and traffic increase we discovered some bottlenecks. Our internal users had feature requests and needed more from the system. Now our incomplete and low-quality data was degrading the productivity of the Spotify data community.

When we designed and built the initial EDI, our team had the mission statement to “provide infrastructure for teams at Spotify to reliably collect data, and make it available, safely and efficiently.” The use cases we focused on, such as music streaming and application monitoring, were well supported. As other use cases started to appear, the assumptions we made when building the system had to be revisited. During three years of operating and scaling the existing EDI, we gathered a lot of feedback from our internal users and learned a lot about our limitations.

Most events generated on mobile clients were sent in a fire-and-forget fashion. This might seem surprising, but because end users can enjoy Spotify while offline, there are some complications around deduplication of data that is re-sent. For example, if we detect that we are missing a data point, we don’t necessarily know if it is actually lost, or just has not arrived yet due to the user being offline, in a tunnel, or maybe having a flaky network connection. This leads to a small percentage of data loss for nearly all the data we collect, which is not acceptable for some types of data. Furthermore, this problem is compounded for datasets generated from a combination of multiple event types in order to “connect the dots” in user journeys where, for example, a single lost event can compromise the whole journey. While we had some specific client code and algorithms to reliably deliver business-critical data exactly once, it was not done in a way that we could extend to all 600+ event types that we had at that time.

The workflow for a customer to progress from “instrumentation to insights” took far too long. Under normal circumstances it would take a customer a week to go through this workflow and get their data. One issue was that multiple components in the EDI had to be schema aware. For example, the receiver service, which is the entry point of the infrastructure, uses the schemas to validate that incoming data is well formed. Due to some tech debt, it took a few hours to propagate the schemas for this validation. This was an eternity in terms of iteration time. Since this process was so painful, some teams tried to instrument their features or services, but then gave up. Some other teams would shoehorn their data into existing data events. This led to gaps in what was instrumented, and a data-quality nightmare.

Backwards compatible? Or stuck in the past?

For strategic reasons, it was critical, in 2016, that we build the EDI in GCP and migrate over as quickly as possible. A key decision we took to make this happen was to stay backwards compatible to minimize the migration time. That meant we had to stick with some historical design choices that we would not have made if we had built this EDI from scratch.

Tab-separated values (TSV): All data events were sent as TSV strings. The schemas were parsed and converted to Avro with a Python library created in 2007.
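
To make the TSV encoding and the receiver-side schema validation described in this post more concrete, here is a minimal sketch in Python. It is not Spotify's implementation: the `Field` class, the `PAGE_LOAD_SCHEMA` schema, and the `parse_tsv_event` function are all hypothetical names invented for illustration, and the real system used Avro schemas rather than this simplified structure.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Field:
    """One column of a hypothetical event schema."""
    name: str
    parse: Callable[[str], object]  # converts the raw TSV column to a typed value


# Hypothetical schema for an illustrative "page load" event.
# TSV carries no field names, so column order is the only contract.
PAGE_LOAD_SCHEMA = [
    Field("user_id", str),
    Field("page", str),
    Field("load_time_ms", int),
]


def parse_tsv_event(line: str, schema: list[Field]) -> dict[str, object]:
    """Validate one TSV-encoded event against a schema and return typed fields.

    Raises ValueError if the column count or a column's type does not match.
    """
    columns = line.rstrip("\n").split("\t")
    if len(columns) != len(schema):
        raise ValueError(f"expected {len(schema)} columns, got {len(columns)}")

    event: dict[str, object] = {}
    for field, raw in zip(schema, columns):
        try:
            event[field.name] = field.parse(raw)
        except ValueError as exc:
            raise ValueError(f"bad value for {field.name!r}: {raw!r}") from exc
    return event


if __name__ == "__main__":
    print(parse_tsv_event("u123\thome\t482", PAGE_LOAD_SCHEMA))
```

Because the encoding is purely positional, a client that adds, drops, or reorders a column produces a line that only a schema-aware component can reject, which is one reason every validating component needs an up-to-date copy of the schema.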
